When Done Isn't Done

A Standard Definition Isn't Your Goal

Every team I've worked with, whether Scrum or some hybrid, had a Definition of Done.

Most of them couldn't tell me what it actually defined.

That's not a criticism of the teams. It's a symptom. The Definition of Done, as it's commonly practiced, has drifted far from what it was supposed to do. And the gap between what it is and what teams need it to be is where a lot of "but we said it was done" conversations live.

In most organizations I've seen, the Definition of Done looks something like this:

code reviewed

unit tests passing

BA/IT/QA test completed

live test completed

documentation updated

deployed to staging

So, in short: a quality gate. A process checklist. Something that applies equally to every ticket, every sprint (a goal delivered in a certain time frame) and every team.

And that's fine. Those things matter. You want your code reviewed. You want tests to pass. Nobody's arguing against quality standards!

But here's what happened along the way: that checklist became the entire answer to "when are we done?" The Definition of Done (DoD) stopped being about the goal and became about the process. It tells you whether the steps were followed. It doesn't tell you whether the result is what was agreed upon.

Think about it from the customer's perspective. The customer (probably) doesn't care that your code passed a QA test. The customer cares whether the thing they were promised is there, works, and does what was discussed. "Is it done?" from a customer's mouth means something completely different than "is it done?" from a process checklist.

The checklist answers: "Did we follow our required steps?"

The customer asks: "Can I properly use it now?"

In a lot of organizations, only the first one has a formal answer.

The Holy Sprint Goal

In Scrum, the Sprint Goal is meant to be that singular focus. One goal per sprint. The team commits to it, works toward it, and at the end of the sprint, the question is clear: did we achieve this goal, yes or no?

The Definition of Done then serves as the quality standard that the increment needs to meet.

In theory, this works. The Sprint Goal answers: "What is the goal in this time frame we can work on, that serves our customer?" The DoD defines the quality bar that outcome needs to clear. Two layers, complementary, clean.

But even when this works perfectly, there's something people seem to overlook: the Sprint Goal's DoD is not the same as the entire project's DoD. The sprint delivers a piece of something larger. The initiative, the project, the product, whatever sits above the sprint for you, has its own definition of "finished." A completely different one. The sprint might deliver increment 4 of 12. Increment 4 is done. The project isn't! And the project's "done" looks nothing like the sprint's "done." Different scope, different stakeholders, different acceptance.

So even in the best case, with a clean Sprint Goal, someone still needs to answer the bigger question: when is the whole thing actually finished? When does the customer get what was discussed and promised? That answer doesn't live at the sprint level. It never did.

Now take that complexity and remove the one thing that made it manageable: the single Sprint Goal.

In most organizations I've worked with, that condition doesn't exist. There isn't one goal per sprint (or per delivery cycle in general). There are five. Or ten. Or the sprint doesn't have a real goal at all, just a collection of tickets pulled from different backlogs serving different stakeholders with different expectations, because the team works as a support team. The Sprint Goal, if it exists, is often a sentence so vague it could apply to any two weeks of work: "Make progress on current priorities or daily business work." That work certainly has value, but when are you ever done with it?

And here's the irony. The typical advice is: "Then practice Sprint Goal definition with your team." Great. But what does that actually mean when there are multiple goals? It means defining what "done" looks like for each of them. That's not Sprint Goal practice. That's the Definition of Done conversation, just nobody calls it that. The Scrum Guide doesn't need to address this, because in its model there's one goal and one DoD for quality. But the moment goals become plural, you need a "definition of finished" for every single one of them. And that's exactly the conversation most organizations skip.

The moment you lose the singular goal, the DoD is left holding a bag it was never designed to carry. A generic quality checklist can't distinguish between ten different goals. It can tell you all tickets passed their tests. It can't tell you whether initiative #3, the one the customer is actually waiting for, is truly finished.

My Done Is Not Your Done

This connects directly to something broader. When the system runs ten goals in parallel without a clear single focus, the DoD quietly shifts from being a completion criterion to being a progress indicator. "Done" stops meaning "finished, shippable, nothing left to do" and starts meaning "this ticket moved to the last defined column."

And that's a problem, because everyone uses the same word but means different things.

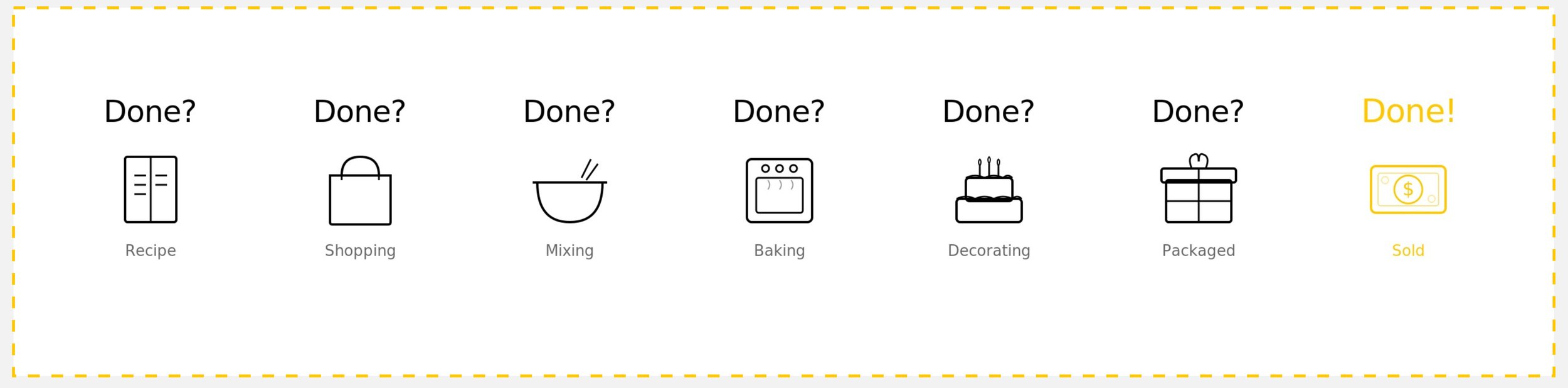

— every step feels finished, only one is done

The developer says "done" and means "my ticket passed the requirement checklist."

The product owner says "done" and means "the feature is complete."

The stakeholder says "done" and means "this is what we discussed, right?"

The customer says "done" and means "I can use/buy this now!"

Nobody is wrong. They're just answering different questions. And the system doesn't clarify which question matters, because the only formal definition is the quality checklist, the so-called "DoD".

This plays out in real time, everywhere. Multiple teams mark all their tickets as "done." The boards look green. The sprints were "successful." And then the stakeholder reviews the delivery and says: "But where's the thing I asked for?" It's technically there, scattered across eight tickets that all passed their tests individually. But who assembled the picture? Who checked whether the pieces, taken together, form the result that was agreed upon? Nobody did, because no definition in the system actually described that result.

What DoD Was Supposed to Be

Here's where the conversation needs to shift. The Definition of Done isn't supposed to be "our standard quality requirements." That's what acceptance criteria (ACC) are for. Acceptance criteria, in the truest sense, are the criteria by which we accept the work. And yes, that includes your standard quality bar. IT test, QA test, documentation, code review, all of that belongs in the acceptance criteria. Those are the conditions that every piece of work needs to meet. The baseline. The non-negotiables.

Now, some of you are probably thinking: wait a minute... The Scrum Guide defines the Definition of Done as "a formal description of the state of the Increment when it meets the quality measures required for the product." So by the book, DoD is a quality standard. And that works, genuinely, as long as there's a single Sprint Goal that defines the outcome. The Sprint Goal says what "done" means in terms of result, the DoD ensures the quality bar is met. Clean setup.

And yes, the obvious counter-argument is: then fix the system. Make sure the team has one Sprint Goal. Get the organization to commit to that if they want Scrum. That's the correct answer in theory. In practice, it's often simply not possible. Not every team builds toward a single deliverable. Not every organization can restructure around clean Sprint Goals. And telling a support team to "just have one Sprint Goal" when their entire purpose is serving five other teams' increments isn't coaching. It's wishful thinking.

And here's the deeper point: many organizations believe the Sprint Goal is their Definition of Done conversation. That Scrum, by requiring a Sprint Goal, solves the problem of defining what "finished" means. It doesn't. What Scrum does is create a space where that conversation is forced to happen. That's why many companies are so eager to implement Scrum, and why it feels amazing at the start. The Sprint Goal demands clarity about the outcome for this sprint. That's valuable. But it's a container, not the conversation itself. And the moment the container breaks, the moment there's no real Sprint Goal, the conversation doesn't move somewhere else. It just stops happening entirely. Once Scrum's rule of a single, clear goal is no longer there to demand it, one thing becomes obvious: most organizations never had that conversation in the first place. And that's the actual issue.

The Scrum Guide isn't wrong. It describes a world where the Sprint Goal exists and does its job. In the world where it doesn't, what the Scrum Guide calls "Definition of Done" is, in practice, what most people would call acceptance criteria. The quality bar. The process standard. And that leaves a gap: who defines the actual outcome? Who writes down what "finished" looks like in terms of the result, not the process?

So yes, what I'm suggesting here deviates from the Scrum Guide's definition. The Scrum Guide treats DoD as a quality measure. I'm arguing it should be more than that, or rather: that what the Scrum Guide calls DoD is what most organizations should simply call acceptance criteria, and reserve "Definition of Done" for the thing that's actually missing: the final outcome.

The Definition of Done, the way it should work, is something else entirely. It's the answer to: "What is the final result? What does 'finished' actually look like? At what point do we say: this is it! Nothing more to do, the goal is reached!"

That's not a checklist. That's a conversation about the outcome. And it's a conversation that needs to happen per goal, not once for the whole team or once for the entire set of increments.

The Discussion That Needs to Move

If there's no single Sprint Goal, the question "what does done look like for this goal?" can't be answered at the sprint level. It has to move back to where the goal was defined in the first place and to the right people.

That might be quarterly planning. It might be initiative planning with the Product Owner. It might be when the increment was scoped. Wherever the goal was born, that's where its Definition of Done needs to be born too. Not as a quality checklist, but as a clear statement: this is the final result. This is what the customer will recognize as the finished product. This is the point where we stop.

For an initiative: "When is this initiative truly done? What's the MSP (Minimum Shippable Product) at the end?"

For an increment, a slice, or a Sprint Goal: "When is this deliverable complete? What's the MVP (Minimum Viable Product) the customer receives?"

For an individual effort ticket: "What is the one result this ticket delivers?"

And notice how the thinking shifts between levels. A Sprint Goal lives in MVP territory: what's the minimum we can deliver that has value? An initiative or project lives in MSP territory: what's the minimum we can actually ship as a finished product? Those are fundamentally different conversations, and they need fundamentally different definitions of "done."

Each level has its own DoD. Not the same generic checklist copy-pasted across levels, but a real, specific answer to "what does finished look like right here?" In a previous entry, the argument was that your work management system needs to mirror the actual complexity of your work. This is the complement: each level of that hierarchy needs its own definition of what "done" means at that level.

The acceptance criteria then do what they were always meant to do: define the quality bar. The requirements. The conditions for acceptance. Including all those standard things like testing, documentation, and review. ACC answers "what quality does this need to have?" while the DoD answers "do we agree this is the final result?"
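For readers who think in data models, here is a minimal sketch of that split. It is only an illustration under my own assumptions: the `WorkItem` class, its field names, and the example items are hypothetical and not taken from any particular work management tool. Each level carries its own outcome statement (the DoD) next to the shared quality checks (the acceptance criteria), and an item only counts as done when both hold, for itself and for everything beneath it.

```python
from dataclasses import dataclass, field


@dataclass
class WorkItem:
    """One level of the hierarchy: initiative, increment, or ticket (hypothetical model)."""
    name: str
    definition_of_done: str          # the outcome: what "finished" looks like at this level
    outcome_confirmed: bool = False  # has someone confirmed that this outcome was reached?
    acceptance_criteria: dict[str, bool] = field(default_factory=dict)  # the quality bar
    children: list["WorkItem"] = field(default_factory=list)

    def meets_quality_bar(self) -> bool:
        # True when every acceptance criterion is checked off.
        return all(self.acceptance_criteria.values())

    def is_done(self) -> bool:
        # Done means: outcome confirmed, quality bar met, and every child item done.
        return (
            self.outcome_confirmed
            and self.meets_quality_bar()
            and all(child.is_done() for child in self.children)
        )


checks = {"code reviewed": True, "unit tests passing": True}
ticket_a = WorkItem("Export button", "User can download the report as CSV", True, dict(checks))
ticket_b = WorkItem("Export API", "Endpoint returns the report data", True, dict(checks))
initiative = WorkItem(
    "Reporting",
    "Customer can export reports without asking support",
    children=[ticket_a, ticket_b],
)

all_tickets_green = all(ticket.is_done() for ticket in initiative.children)
print(all_tickets_green)     # True: the board looks green
print(initiative.is_done())  # False: nobody has confirmed the initiative's outcome yet
```

The design choice worth noticing is that a green board is not enough in this model: the initiative stays open until its own outcome is confirmed, which is exactly the check a generic checklist cannot perform.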

Are You Done Yet?

The objection is predictable: "This is just semantics. DoD vs. ACC, who cares what you call it?" Fair enough. It's not about naming. But when DoD is just a quality checklist, the question "are we done?" gets answered by process. Check the boxes, move the ticket. The actual goal, if it was ever clearly stated, lives in someone's head or in a slide deck from three months ago. Not connected to the work system.

When DoD is a real definition of the final result, connected to the goal at the level where it lives, something changes. The team can trace their work upward: this ticket serves this goal, which delivers a step in a bigger goal. When all the pieces are there, the whole thing is done. Really done. Customer-recognizable done. Done-Done. And "done" means the same thing to everyone, not because you forced alignment through a meeting, but because the system itself carries the answer.

Take a look at your current Definition of Done. Does it tell you when the goal is reached? Or when the process was followed?

If it's the second one, your quality standards are probably fine. But you might be missing the part where someone defines what "finished" actually looks like. The part where the customer points at the result and says: "Yes. That's what we discussed!" If that definition doesn't live in your system, it lives in people's heads. And heads forget, interpret, and disagree.

Before you start working on something, agree on what the end looks like. Not the process to get there. The end itself. Write it down where the work lives.

Done should mean done. Everything else is just acceptance criteria.
